Editorial: The Role AI Response Pattern Analysis Plays in Content Strategy

AI search tools do not behave like Google's traditional results page. You do not get a stable ranking where position three stays position three. Large language models generate fresh answers each time, pulling from different sources and structuring responses differently. That makes simple tracking like “Did our brand show up?” unreliable.

Instead of counting mentions, the smarter move is to study how answers are built. When you ask AI tools about your category, they tend to follow certain patterns.

They might list top tools, compare features in a table, outline a step-by-step framework, or emphasize themes like pricing transparency or security. Over time, these structures repeat. That repetition tells you what the model thinks matters in your category.

Most companies already have a lot of content. The real gap is understanding how AI systems frame the category. If every AI answer highlights implementation steps and customer support depth, but your content focuses only on features, there is a mismatch. If certain competitors keep appearing across different tools, that suggests they are more visible in the sources these models learn from and cite.

You do not need advanced software to start. Pick a handful of priority topics. Run variations of the same prompt across tools like ChatGPT, Gemini, or Perplexity.

Log the outputs in a spreadsheet. Track which brands appear, what themes are emphasized, and how answers are structured. After enough repetitions, patterns become obvious.
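If the spreadsheet grows past what you can eyeball, a few lines of Python can do the tallying. This is a minimal sketch, not a prescribed tool: the rows, brand names, themes, and field names below are all made up for illustration, and it assumes you have already tagged each logged answer by hand.

```python
from collections import Counter

# Hypothetical log rows, one per prompt run, as they might be exported
# from the tracking spreadsheet. Brands and themes are placeholders.
runs = [
    {"tool": "ChatGPT", "structure": "ranked list",
     "brands": ["BrandA", "BrandB"], "themes": ["durability", "pricing"]},
    {"tool": "Gemini", "structure": "comparison table",
     "brands": ["BrandA", "BrandC"], "themes": ["durability", "warranty"]},
    {"tool": "Perplexity", "structure": "ranked list",
     "brands": ["BrandA"], "themes": ["durability", "pricing"]},
    {"tool": "ChatGPT", "structure": "ranked list",
     "brands": ["BrandB", "BrandC"], "themes": ["pricing"]},
]


def tally(rows, field):
    """Count how many runs mention each value of a list-valued field."""
    counts = Counter()
    for row in rows:
        # set() so a value repeated inside one answer counts once per run
        counts.update(set(row[field]))
    return counts


brand_counts = tally(runs, "brands")
theme_counts = tally(runs, "themes")
structure_counts = Counter(row["structure"] for row in runs)

for label, counts in [("Brands", brand_counts),
                      ("Themes", theme_counts),
                      ("Structures", structure_counts)]:
    print(label)
    for value, n in counts.most_common():
        print(f"  {value}: {n} of {len(runs)} runs")
```

Counting per run rather than per mention keeps one long answer from dominating the picture, which matters when the goal is to find what repeats across answers.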

This method is not perfect. AI outputs change. Models update. Live search access varies. And none of this replaces traditional performance metrics like traffic, rankings, or conversions. Think of it as a layer of market intelligence, not a replacement for analytics.

The practical takeaway is to stop treating AI visibility like a leaderboard. Treat it like qualitative research. Study recurring answer structures and themes, then adjust your content so it fits the logic AI systems repeatedly use when explaining your category.

Spacebar Studios will handle your newsletter setup for free — from ICP refinement to template design and sample drafts. After month one, we officially hit the ground running.

Case Study: How Condition 1 Achieved 78% List Growth to Fuel a 680% Revenue Explosion

Condition 1 is a Texas-based manufacturer of American-made rugged protective cases for firearms, cameras, and outdoor gear. Despite having a premium product and a loyal niche following, the brand struggled with inconsistent holiday performance. Their seasonal campaigns were fragmented, reaching only small segments of their audience and failing to convert passive subscribers into active buyers.

Instead of relying on basic batch-and-blast tactics, the team initiated a 10-month strategic overhaul to transform their email and SMS channels into a high-growth acquisition engine. The primary focus was building a massive, warm audience well in advance of the 2025 holiday season. They moved away from narrow targeting and implemented a comprehensive integration strategy where every digital touchpoint, from social ads to site pop-ups, was optimized for high-intent lead capture.

The growth was driven by a sophisticated two-step approach to list building and nurturing. By A/B testing sign-up incentives and aggressively scaling their capture forms, the brand ensured they weren't just collecting emails, but identifying high-value customers interested in specific product lines. They synchronized their Welcome and Abandonment flows to reflect seasonal offers, ensuring that any new lead entered a high-conversion environment immediately upon joining the list.

The strategy culminated in a massive expansion of their owned audience, which provided the necessary leverage for their Black Friday/Cyber Monday (BFCM) execution. By the time the 2025 holiday window opened, the brand had nearly doubled its reachable audience. They then utilized data-driven resends of top-performing content and shifted their messaging from simple product features to gift-focused positioning, tapping into the peak seasonal shopping mindset.

The results of this audience-first approach were transformative. The email list grew by 78% year-over-year to 240,000 subscribers, while the SMS list surged by 124% to 85,000. This expanded reach directly translated into a 464% increase in Black Friday revenue and a 527% jump in Cyber Monday sales compared to the previous year. Most notably, 86% of the nearly 14,000 orders came from new customers acquired through these optimized growth funnels.
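For readers who want to sanity-check the list numbers, the prior-year sizes can be backed out from the growth rates. This is a rough sketch under two assumptions that the case study does not state: that each percentage is measured against the prior-year list size, and that email and SMS subscribers can simply be added together (in practice the two lists overlap).

```python
def implied_baseline(current_size, growth_pct):
    """Back out the starting size from the current size and a growth rate in percent."""
    return current_size / (1 + growth_pct / 100)


# Current list sizes and growth rates as reported in the case study.
email_now, email_growth = 240_000, 78
sms_now, sms_growth = 85_000, 124

email_before = implied_baseline(email_now, email_growth)
sms_before = implied_baseline(sms_now, sms_growth)

# Naive combined audience: ignores people subscribed to both channels.
combined_ratio = (email_now + sms_now) / (email_before + sms_before)

print(f"Implied email list a year earlier: {email_before:,.0f}")
print(f"Implied SMS list a year earlier: {sms_before:,.0f}")
print(f"Combined audience multiple: {combined_ratio:.2f}x")
```

Under those assumptions the combined audience comes out a little under 2x, which lines up with the "nearly doubled" claim.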

Play of the Week: A/B Testing Roadmaps That Actually Improve Revenue

Most email and lifecycle programs stall because they test without a system. Klaviyo explains that the issue is usually a lack of testing discipline. Brands often run experiments without defining success, change too many variables at once, and end up with results they cannot trust.

The better approach is to treat testing like a process. Brainstorm broadly, define one KPI to decide the winner, prioritize by impact and effort, isolate a single variable, document tests outside the platform, and repeat proven wins before scaling them everywhere.

Define the winner before launch

High-performing teams set one clear success metric before testing. Without a defined KPI, results can be debated after the fact. The fix is to assign a single outcome like revenue, click rate, repeat orders, average order value, or unsubscribes, so decisions stay fast and consistent.
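One way to make the "decide before launch" habit concrete is to write the KPI and the decision threshold down as constants, then let a standard test make the call. The sketch below uses a two-proportion z-test on click rate. The choice of test, the 0.05 threshold, and the sample numbers are illustrative assumptions, not something prescribed by Klaviyo.

```python
import math

# Decided BEFORE launch: one KPI and one decision threshold (both illustrative).
KPI = "click_rate"
ALPHA = 0.05


def two_proportion_z_test(successes_a, n_a, successes_b, n_b):
    """Return (z, two-sided p-value) for the difference between two rates.

    Assumes both groups are non-empty and the pooled rate is not 0 or 1.
    """
    rate_a = successes_a / n_a
    rate_b = successes_b / n_b
    pooled = (successes_a + successes_b) / (n_a + n_b)
    std_err = math.sqrt(pooled * (1 - pooled) * (1 / n_a + 1 / n_b))
    z = (rate_b - rate_a) / std_err
    # Two-sided p-value under the standard normal distribution.
    p_value = math.erfc(abs(z) / math.sqrt(2))
    return z, p_value


def pick_winner(successes_a, n_a, successes_b, n_b, alpha=ALPHA):
    """Apply the pre-agreed rule: declare a winner only below the threshold."""
    z, p_value = two_proportion_z_test(successes_a, n_a, successes_b, n_b)
    if p_value >= alpha:
        return "no clear winner"
    return "B" if z > 0 else "A"


# Made-up example: variant A got 200 clicks from 5,000 sends, B got 260 from 5,000.
result = pick_winner(200, 5000, 260, 5000)
print(f"KPI: {KPI}, decision: {result}")
```

Because the metric and threshold are fixed up front, there is nothing left to debate once the numbers come in.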

Test one variable

The clearest rule is to isolate one change at a time. If you are testing send time, then everything else must stay the same. Changing multiple variables at once breaks attribution. You may see results move, but you cannot reliably explain what caused them.

Prioritize for impact and feasibility

Not every test needs immediate focus. Rank ideas by impact, effort, and overlap, then start with high-impact, low-effort opportunities. Timing also matters, since some tests fit seasonal windows or major launches, so they should be planned into the calendar instead of squeezed in reactively.
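The ranking step can be as simple as a sorted list. Here is a minimal sketch that assumes a 1 to 5 score for impact and effort and a flag for ideas that overlap with a test already running. The scoring scale, the impact-divided-by-effort formula, and the test ideas are hypothetical. Use whatever scheme your team already trusts.

```python
# Hypothetical backlog: impact and effort scored 1 (low) to 5 (high).
ideas = [
    {"name": "Full template redesign", "impact": 4, "effort": 5, "overlaps_running_test": True},
    {"name": "Welcome flow incentive", "impact": 5, "effort": 3, "overlaps_running_test": False},
    {"name": "Send time: morning vs. evening", "impact": 3, "effort": 1, "overlaps_running_test": False},
    {"name": "Subject line: benefit vs. urgency", "impact": 4, "effort": 1, "overlaps_running_test": False},
]


def priority_key(idea):
    """Sort non-overlapping ideas first, then by impact per unit of effort."""
    score = idea["impact"] / idea["effort"]
    # False sorts before True; negating the score puts the highest score first.
    return (idea["overlaps_running_test"], -score)


roadmap = sorted(ideas, key=priority_key)

for rank, idea in enumerate(roadmap, start=1):
    score = idea["impact"] / idea["effort"]
    note = " (wait: overlaps a running test)" if idea["overlaps_running_test"] else ""
    print(f"{rank}. {idea['name']}: score {score:.2f}{note}")
```

Pushing overlapping ideas to the bottom keeps two tests from touching the same audience or message at once, which would undo the one-variable rule.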

Document outside the platform so learning compounds

Keep a simple spreadsheet for tests, even if the platform has reporting. Logging the hypothesis, chosen KPI, audience, duration, seasonality notes, final decision, and key learnings turns isolated experiments into lasting knowledge.
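If you would rather generate that log than type it, the fields above map neatly onto a small record type that writes to CSV. This is a sketch under assumptions: the class name, field names, and example entry are invented here, and any spreadsheet with the same columns does the same job.

```python
import csv
import io
from dataclasses import asdict, dataclass, fields


@dataclass
class ExperimentRecord:
    """One row in the test log. Field names are illustrative."""
    hypothesis: str
    kpi: str
    audience: str
    duration_days: int
    seasonality_notes: str
    decision: str
    learnings: str


def to_csv(records):
    """Serialize records to CSV text with a header row."""
    buffer = io.StringIO()
    columns = [f.name for f in fields(ExperimentRecord)]
    writer = csv.DictWriter(buffer, fieldnames=columns)
    writer.writeheader()
    for record in records:
        writer.writerow(asdict(record))
    return buffer.getvalue()


# Made-up example entry.
log = [
    ExperimentRecord(
        hypothesis="Gift-focused subject lines lift clicks in November",
        kpi="click_rate",
        audience="New subscribers (under 30 days)",
        duration_days=7,
        seasonality_notes="Ran the week before Black Friday",
        decision="Adopt for holiday campaigns only",
        learnings="Retest with loyal customers before making it the default",
    )
]

csv_text = to_csv(log)
print(csv_text)
```

The output pastes straight into a shared sheet, so the log lives outside the email platform and survives a tool change.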

Repeat before you scale

A single win is not a universal rule. What works for new subscribers may not work for loyal customers, and what succeeds in a campaign may fail in a flow. The right habit is to validate a result across segments, buying stages, and campaign types before making it the default.

Inbox competition is tighter than ever, small inefficiencies add up quickly, and undisciplined testing creates motion without real learning. A clear A/B roadmap gives each test a defined purpose, turns results into reusable knowledge, and makes growth more predictable over time.

Metric Benchmark

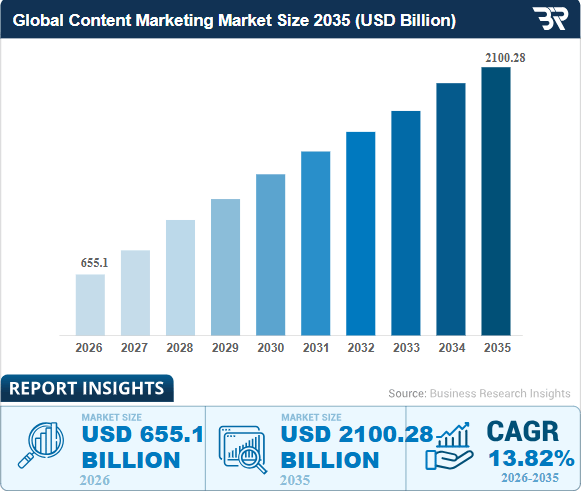

Source: Business Research Insights

Closing Note

Whether you are decoding AI response patterns or scaling an email list to nearly double your reachable audience, the goal is the same: move from one-off campaigns to a repeatable system that reduces guesswork. As Condition 1’s revenue jump shows, success in a high-CAC environment belongs to the builders who treat every digital touchpoint as a source of learning for their next growth system.

See you next week.

📣 Forward or Reply

If you liked this edition of Growth Curve, forward it to a founder who needs to stop renting audience — and start owning it.